Show and Tell, Not Just Tell: How Lean Practitioners Are Learning to Partner with AI

- Eric Olsen

- Mar 31


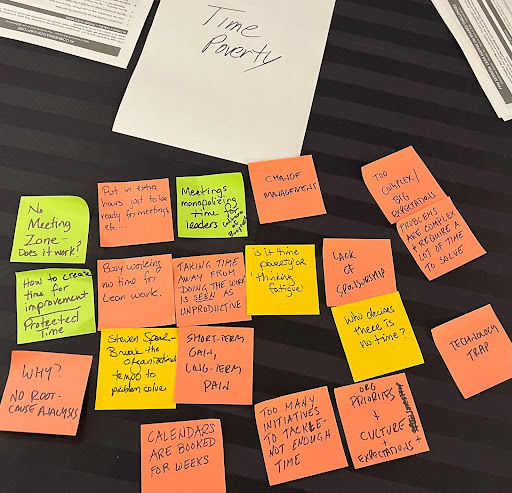

This is Part 4 of a four-part series documenting practitioner conversations from the Future of People at Work Lean Coffee session at the LEI Lean Summit in Houston, March 13, 2026. Each post focuses on one of the four cross-initiative themes the community explored: succession planning, time poverty, organizational silos, and AI integration.

At the LEI Lean Summit in Houston, the AI integration breakout table did something worth noting: they used the Lean Coffee method to discuss AI. Sticky notes. Dot voting. Time-boxed topics. The irony was intentional—and it surfaced something important about how the lean community is learning to approach this technology.

The Gateway: Meeting Notes

The group’s central insight on education was simple: “Show and tell is more important than the tell.” People don’t learn AI by being told to try it. They learn by watching someone walk through a prompt, ask a question, and improve the output. One participant described showing clinical staff with no AI experience how to use it for meeting notes, a low-stakes entry point that built comfort for more advanced use. Using AI for meeting summaries, the group found, makes project managers “better facilitators” by freeing them from splitting attention between note-taking and leading the conversation. It also lowers the cognitive load in complex data discussions. The lesson on trust was that it is built iteratively: early AI-generated notes on WebEx were poor, but Teams produced highly accurate ones. Don’t trust AI immediately; commit to running the experiment and correcting over time.

Humans at Every Decision Point

The group refined the common “human in the loop” principle: verification isn’t just needed at the end, but at every customer-impacting decision point. They framed this as “trust but verify”—the human remains responsible for understanding what AI output means. And they were clear: don’t implement an AI solution without first understanding the problem. If a process owner doesn’t understand the current workflow, AI won’t fix it—it will amplify the issues.

Replicating the Expert, Not Replacing the Human

A “department of one” CI practitioner described training AI agents as automated coaches using curated materials—the goal being to “put me in their pocket” so others aren’t dependent on a single human expert. When someone raised the fear of replacing yourself, the response was grounding: “Our advantage is always going to be our community and the fact that we see nonverbal cues and connect with people.”

Online, Colleen Soppelsa coined a phrase that captured the room: “PDCA—Plan, Do, Check, and Abandon.” Organizations try AI once, it doesn’t work perfectly, and they quit. Chirag Bhatt drew parallels to Industry 3.0 automation. The group proposed using A3 logic frameworks for AI proposals—connecting AI governance directly to the lean thinking tools practitioners already know.

Knowledge Map: Connecting to Your Context

Process Keywords: AI-assisted documentation, show-and-tell education, human-in-the-loop governance, trust-but-verify methodology, meeting notes as gateway, AI agent development, iterative trust-building, PDCA for AI experimentation

Context Keywords: AI adoption fear, governance gaps, tool stigma, cognitive load, department-of-one constraints, automation lessons, data quality concerns, experimentation resilience

Application Triggers:

Encountering AI resistance → “Show and tell > tell”: walk people through prompts, start with meeting notes

Unclear AI governance → “Trust but verify at every decision point” and A3 frameworks for proposals

Giving up after first AI failure → Recognize “PDCA and Abandon” pattern; commit to iterative experiments

Fear of AI replacing CI practitioners → “Our advantage is community and nonverbal cues”—AI extends, not replaces

Related Continual Improvement Themes: Scientific thinking, respect for people, standard work, continuous improvement, knowledge transfer, organizational learning, process clarity before automation

This post was developed from practitioner conversations at the LEI Lean Summit Lean Coffee session (March 13, 2026), drawing on the AI integration breakout transcript and a Zoom breakout recording from Sally Gatlin, and was synthesized with Claude.AI assistance. Editorial contributions by Eric Olsen, Rachel Reuter, Colleen Soppelsa, and Dave Ostreicher. It represents ongoing work by the Future of People at Work initiative, a collaboration of Catalysis, Central Coast Lean, GBMP Consulting Group, Imagining Excellence, Lean Enterprise Institute, Shingo Institute, The Ohio State University Center for Operational Excellence, Toyota Production System Support Center (TSSC), and University of Kentucky Pigman College of Engineering.

Continue the conversation:

Join our monthly community gatherings: https://fpwork.org

Miro Board: https://miro.com/app/board/uXjVKm4rtGI=/?moveToWidget=3458764664033400655&cot=14

People to Connect With: @Colleen Soppelsa @Chirag Bhatt @Bruce Hamilton @Rachel Reuter @Eric O. Olsen @Kelly Reo
